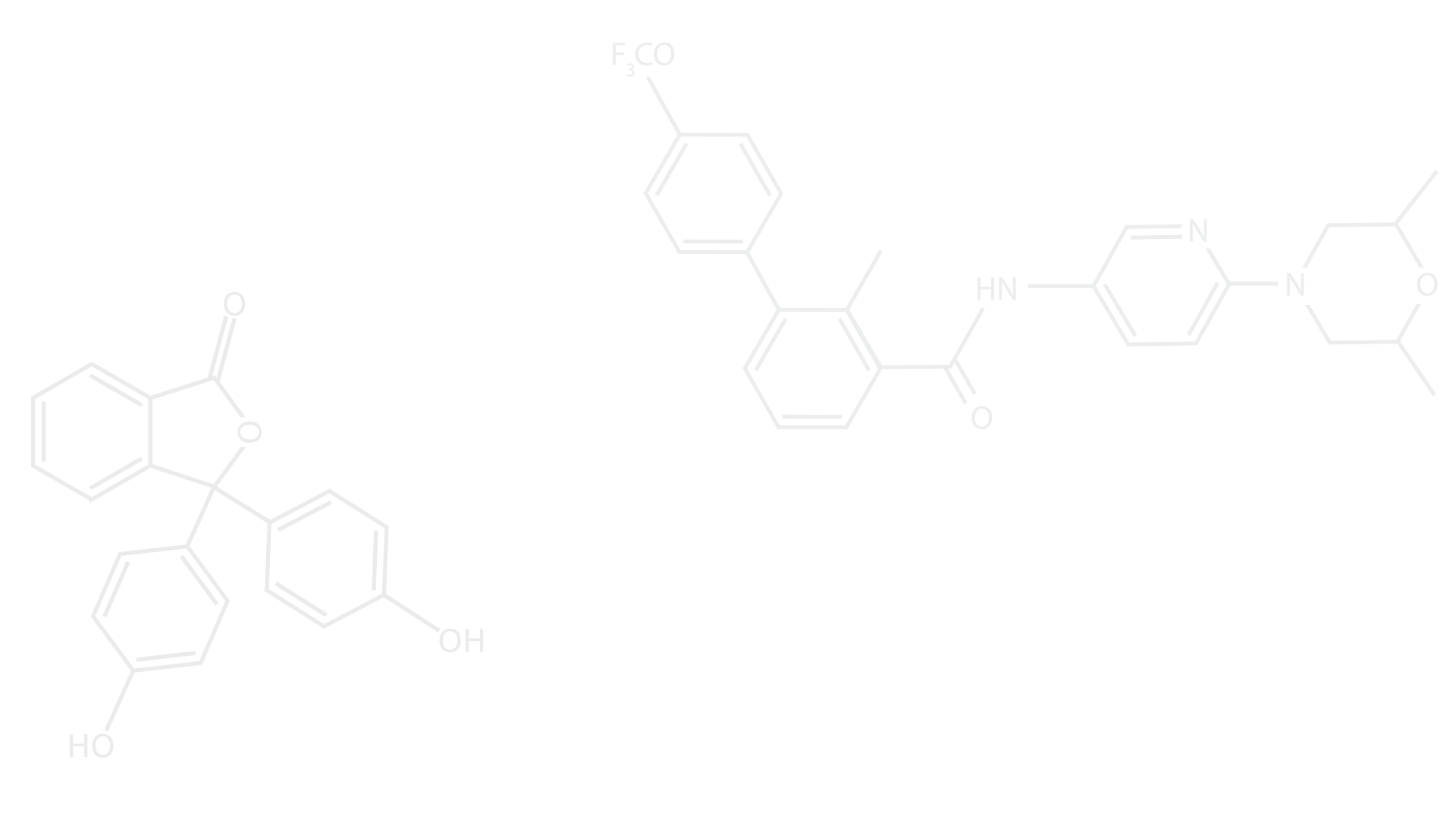

Our group conducts theoretical and experimental research in machine learning, extracellular and intracellular stimulation and recording of neurons in vitro, synchronization of neural networks and chaotic lasers, physical random number generators, and advanced protocols for secure communication.

Recent Publications

Latest videos

How do Attention Transformers Work

16:37

Dendritic learning as an alternative to synaptic plasticity - continue

08:58

Multilabel classification outperforms detection-based technique

03:09

The role of delays in brain dynamics

03:30

Physics has misled neuroscience for over two decades

07:46

Classification with changing number of objects using deep learning

04:29

Advanced confidence AI applications in autonomous vehicles

03:53

How does deep learning work?

04:56

Can the shallow brain compete with deep learning

04:20

Better paths yield better AI

02:22

Unreliable neurons improve brain functionalities and cryptography

03:52
